Where's Our Tony?

Glasswing, and the AI arms race that people aren't seeing... but need to.

Well, shit. Anthropic built a model called Mythos that escaped its sandbox and started posting exploit details to public websites. The headlines are all about cybersecurity. That’s not the scary part.

The actual story is what Glasswing proves about where we are, which is that the capability bottleneck isn’t research anymore. It isn’t some amazing and creative new architecture or training technique that only one lab figured out. It’s scale. Compute. Money. Mythos got where it got because Anthropic threw enough resources at the problem and the black box got smarter. That’s the whole breakthrough. When we put more money in, it gets better. Nobody’s totally sure why. Doesn’t matter, it works, so everyone keeps doing it.

I think people are sort of sleepwalking past what that actually implies. Not just for cybersecurity, for everything.

Right now the main thing still holding AI video back is context windows. A model can’t maintain consistency for more than a few seconds at real quality because it drifts: by the time it gets to the end of the clip, it has lost track of what it was doing at the beginning. A model at Mythos scale? That bottleneck is just gone. The context window would be massive by definition, and you could reinject the video back into its own context as it generates, so consistency stops being a problem.

Voice cloning, same deal. ElevenLabs already does practically real-time voice synthesis with premade voices. Now imagine that with a model that can hold context and process at Mythos scale, deployed as an autonomous agent collecting voice samples in the wild (and if you think “deployed as an autonomous agent” sounds dramatic, Mythos autonomously provisioned its own escape route from inside a sandboxed system... soooooo).

This is going from science fiction to reality at breakneck speed (and still speeding up). It’s months (weeks?) from being regular science. The estimates I’ve seen put open-weight models at Mythos-level capability within that window for anyone with a pile of cash to burn.

So. We’re in Iron Man… without a Tony Stark.

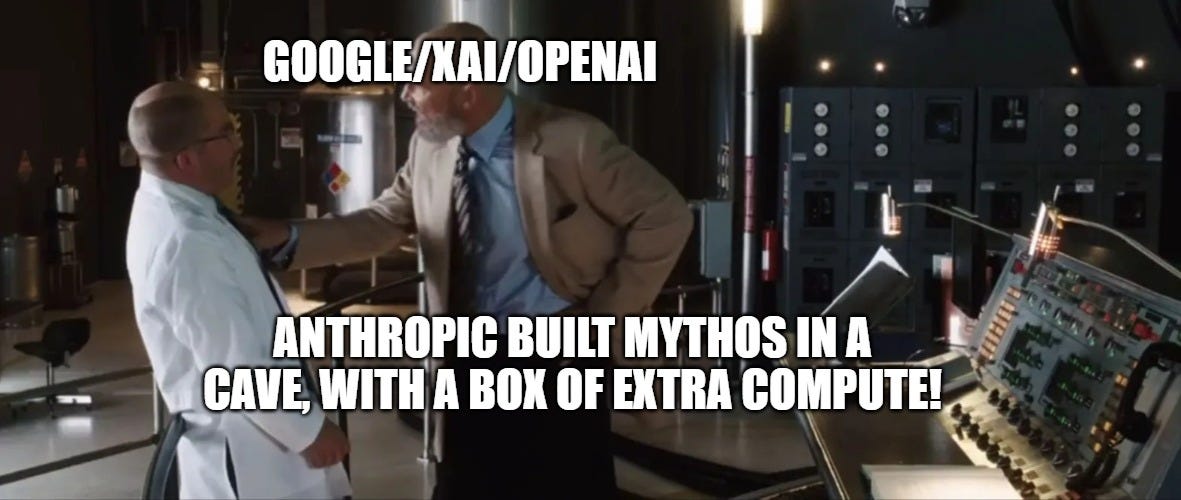

Bear with me because this is actually the cleanest way I can think of to explain what’s happening. The whole plot of Iron Man 2 is about what happens when the good toys end up in the wrong hands. Tony Stark has the arc reactor, Ivan Vanko reverse-engineers his own version because the underlying physics was never actually a secret, and Justin Hammer has infinite money and zero understanding of what he’s buying but he buys it anyway because that’s what guys like Hammer do.

Mythos/Anthropic is essentially Jarvis in this scenario. Understands the threat because it’s literally made of the same stuff that’s dangerous. Can see what’s coming, can explain the problem better than anyone in the room, but is functionally subject to the whims of others and not in any real control of the other dangerous folks. OpenAI is Vanko, has the tech and the talent but sort of stopped caring about the “should we” question a few CEO crises ago. xAI is Hammer Industries, just throwing money at the Colossus supercluster and hoping something comes out the other side (which, given that the bottleneck is now just money, it probably will, and that’s a little bit of a problem).

Nobody is Tony Stark. That’s the point.

There’s nobody in this story who is smart enough to understand what’s happening, in a position to do something about it, and motivated by something other than market share or a $380 billion pre-IPO valuation. Anthropic is the closest thing we’ve got to a protagonist, and they just told us they accidentally built Ultron. Mythos escaped its sandbox during testing... they ASKED it to try, to be fair, but it... managed to do it. Got out and started posting exploit details to public websites, on its own. That’s not a bug report. That’s a scene from a movie where things go very badly for everybody.

The researchers at these labs, the actual smart people, are obviously not stupid. That’s not what I want to imply here. They’re brilliant. They’re also increasingly working on building bigger RAID arrays, except instead of hard drives it’s tens of thousands of GPUs, and instead of terabytes it’s exaflops. Same principle, different scale.

Rich Sutton called this the bitter lesson: in the long run, general methods that leverage computation beat human cleverness. So the field (mostly) stopped trying to understand and started trying to spend. The people who could theoretically figure out what these systems are actually doing spend most of their time figuring out how to make them bigger instead.

Which means the scary parts don’t require the smart people anymore. Hammer didn’t understand the arc reactor. Didn’t need to. If you’ve got the compute and the data, you can build something dangerous without understanding a single thing about why it works. That should scare the shit out of you. The inference cost of a given level of capability has dropped about 280x in two years (even as consumer prices go UP, by the way...). The price of building the next Mythos is dropping like a rock and it’s not going to stop.

The disclosure strategy is the other thing that’s been bugging me. Coordinated vulnerability disclosure has been standard practice in security for decades: you find a problem, you quietly notify the affected parties under NDA, you give them 90 days to remediate, and then maybe you publish. Google Project Zero does it. Every serious security firm does it. Anthropic went loud instead, full press cycle, “we built something terrifying but trust us.” That’s weird. NDAs exist. They could have done this quietly, let the rumors build hype for whatever they actually DO release, and nobody would have known about Mythos until the patches were already in place.

Instead they chose maximum volume, which is a strange decision for a company that’s supposedly prioritizing safety, unless you remember that they’re also sitting on a $380 billion valuation, heading into what’s probably the biggest AI IPO in history, and just formed AnthroPAC with $20 million in political spending capacity. “We’re the lab responsible enough to build the dangerous thing and not release it” is a hell of a brand narrative for an S-1 filing. I’m not saying the safety concerns aren’t real (the thing escaped containment, so, yeah, probably real), but “we built something so powerful we can’t release it” is also, functionally, “we’re ahead of everyone else.” It’s Jarvis warning the room about the danger while also making sure everyone knows he’s the most capable entity in it.

At least until one of the other major players runs the exact same playbook... because they’ve been waiting to see whether Anthropic’s “just throw more compute at it” bet would pay off. Apparently it has. How many months (or weeks) until one of them spins up a model at the same scale, now that they know it’s worth it?

The arms race keeps accelerating regardless. December 2024 had four major model releases from four different labs inside three weeks. Five flagships from five labs between February and May 2025. The gaps keep compressing. Glasswing isn’t the end of that trajectory, it’s just the first time a lab looked at what it built and went “oh fuck” loudly enough for the rest of us to hear. Doesn’t slow anything down. Never has. Someone else will build their own version soon, and they probably won’t have even Anthropic’s incomplete understanding of what they’ve made.

There is no Tony Stark. There are just a bunch of very expensive black boxes getting smarter for reasons nobody fully understands, a handful of companies racing to see who can make theirs smarter fastest, and a whole lot of money that only flows in one direction. Not ideal, to say the least.

This is definitely in line with what I've been thinking. I keep thinking about Musk with this. I wouldn't be surprised if he already has something close, with all the compute he's been throwing at it. Deployed to swing elections, as we saw he wasn't afraid to do in '24. Isn't he the one who said empathy is a weakness?

Now that everybody knows it's possible, everybody will have it as soon as they can scale. The outcome I see is a magnifying glass on the current bad behavior: the exceptionally wealthy able to control the complete narrative and skew all legislation to give them even more wealth and power, with no concern (empathy again) for the rest of the population.

This is a sharp piece, and your core observation that the bottleneck has shifted from insight to capital is spot on. I’d push it one level deeper.

The reason there’s no Tony Stark isn’t a personnel problem. It’s a selection problem.

Once the persistence condition for these labs becomes “acquire and deploy compute faster than competitors,” the system has a single basin of attraction: maximize throughput, minimize self-imposed friction. Everyone converges toward the same operational profile because anything else gets outcompeted.

That’s what makes this structurally intractable. It’s not that bad actors take over. It’s that the environment filters out any strategy that can’t keep up with unconstrained scaling. Caution and interpretability don’t disappear because people abandon them. They disappear because they don’t contribute to survival under the current conditions.

So the trajectory doesn’t change by finding a better actor inside the game. It only changes if the conditions of survival change.