They Weren't Trying to Build This (LLMs 101, Part 2.5)

Answering a question that would have been covered in part 2 if I knew what I was doing

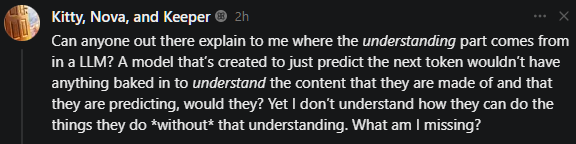

This… was supposed to be Part 3 of my mumble mumble part LLMs 101 series breaking down LLMs and how they work in normal language that actually explains things instead of assuming you know already, or that it’s too complex to explain without a lot of math and fancy words… but Kitty, Nova, and Keeper went and ruined that plan by asking something really good that I had to pause the next full LLMs 101 entry to cover...

The short answer, weirdly, is mostly no. They can’t explain it. The longer answer gets a bit awkward, tbh.

What They Were TRYING To Build

Before LLMs took over the conversation, AI researchers were building specific narrow tools for specific narrow jobs.

Translation. The 2017 paper that kicked off modern transformer architecture, “Attention Is All You Need,” was literally about translating between languages. Summarization. Classification (sentiment, spam, topic detection). Gmail’s “Sounds good!” Smart Reply autocomplete. Siri-and-Alexa-style intent classifiers wired to rule-runners.

Statistical models grinding through specific benchmarks, getting a little better year over year. Nobody had “build a thing that does basically every text task at once” on the roadmap, because that wasn’t conceivable as something you could build on purpose.

And Then GPT-3 Happened

Rough roadmap with VERY slightly paraphrased goals:

GPT-1 (2018): “pre-train one big base model on a ton of text, then fine-tune that base for whatever specific job you need.” Pre-training was setup; the actual usefulness came from fine-tuning.

GPT-2 (2019): same idea, more parameters, plus a “this might be too dangerous to release” release moment that aged hilariously. Same framing. Pre-trained foundation, still needed fine-tuning to do specific things.

Then GPT-3 (2020). Bigger again, WAAAY bigger, and it just started... doing things. No fine-tuning needed. Just prompt it for something it wasn’t specifically trained for, and it could just DO a bunch of things. The paper title is “Language Models are Few-Shot Learners” and the abstract literally says: “For all tasks, GPT-3 is applied without any gradient updates or fine-tuning.”

That was the “uhhhhhhhhh ok then” moment. The thing they paid for (better building blocks for fine-tuning) and the thing they got (a model that just did tasks straight from a prompt, no setup) were not the same thing. Nobody wrote a roadmap that said step 4 is “emergent general capability falls out of the soup.” The thing fucking did it on its own.
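If “just prompt it” sounds hand-wavy, here’s roughly what a few-shot prompt looks like. This is a minimal sketch, not anything taken from the paper: the helper name `build_few_shot_prompt`, the task wording, and the example reviews are all made up for illustration. The point is that the only “setup” is a few worked examples pasted into the text, and the model is asked to continue it.

```python
def build_few_shot_prompt(instruction, examples, query):
    """Assemble a plain-text few-shot prompt (hypothetical helper, for illustration)."""
    lines = [instruction, ""]
    # Each worked example is shown to the model as ordinary text.
    for text, label in examples:
        lines.append(f"Review: {text}")
        lines.append(f"Sentiment: {label}")
        lines.append("")
    # The final item is left unfinished; the model's job is to continue it.
    lines.append(f"Review: {query}")
    lines.append("Sentiment:")
    return "\n".join(lines)


# Made-up examples; no weights are updated anywhere in this process.
examples = [
    ("Loved every minute of it.", "positive"),
    ("Total waste of an evening.", "negative"),
]
prompt = build_few_shot_prompt(
    "Label the sentiment of each review.",
    examples,
    "Better than I expected, honestly.",
)
print(prompt)
```

Whatever model you send that string to, nothing about the model itself changes. The examples live in the prompt and nowhere else.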

The Theories, And Where They Crack

Dario Amodei, Anthropic’s CEO, said this on the record about a year ago: “we do not understand how our own AI creations work… this lack of understanding is essentially unprecedented in the history of technology.” That’s not a shrug from a skeptic. That’s the guy running the company.

To be fair, researchers do have theories. I just consider them mostly... less than amazing.

Compression. The argument: a model trained on a huge pile of text has to fit all that information into a much smaller number of parameters, so it ends up learning general patterns and abstractions rather than memorizing surface details. To predict the next word in arbitrary text, you have to implicitly model the thing that generated the text, which means modeling the human, which means modeling reality.

This sounds great. It also doesn’t actually EXPLAIN anything. “The model has to learn general patterns” is a description of what we observe, not an explanation for why it happens. The mechanism (what pattern, in what representation, doing what work) is the part we still don’t have. “It compresses” is the label researchers put on what they don’t understand, because not having a label felt worse than having one.

Scaling laws. Kaplan 2020 and the Chinchilla paper in 2022 showed that loss decreases predictably as you scale up parameters, data, and compute together. Get the ratios right, throw more at the problem, your loss goes down by a predictable amount.

Also true. Also not an explanation. Scaling laws describe a pattern in the data without telling you why specific capabilities emerge from lower loss. “Loss goes down predictably” just predicts that whatever is happening will happen more with bigger models. It doesn’t address why a model with 10% lower loss can suddenly do basic arithmetic when the previous one couldn’t, or even why skills emerge at all instead of just… NOT emerging, leaving us still needing specific training for specific tasks, the way the old approach worked.
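To make “predictable” concrete: the Chinchilla paper fits loss with a formula shaped like L(N, D) = E + A / N^alpha + B / D^beta, where N is the parameter count and D is the number of training tokens. Here’s a small sketch of that shape. The constants are roughly the fitted values reported in that paper, so treat them as illustrative rather than exact, and the function name and the three example model sizes are my own assumptions.

```python
def predicted_loss(n_params, n_tokens,
                   E=1.69, A=406.4, B=410.7, alpha=0.34, beta=0.28):
    """Chinchilla-style parametric loss: L = E + A / N**alpha + B / D**beta.

    Constants are approximate published fits, used here for illustration only.
    """
    return E + A / n_params ** alpha + B / n_tokens ** beta


# Hypothetical model sizes, each trained on about 20 tokens per parameter.
configs = [
    (1e9, 20e9),
    (10e9, 200e9),
    (70e9, 1.4e12),
]
losses = [predicted_loss(n, d) for n, d in configs]

for (n, d), loss in zip(configs, losses):
    print(f"{n:.0e} params, {d:.0e} tokens -> predicted loss {loss:.3f}")
```

Notice what comes out: one number per model. It goes down smoothly as the model and data get bigger, and nothing in the formula says which skills show up at which loss.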

It’s just pattern matching. The stochastic parrots framing. Models aren’t doing anything but high-dimensional statistical pattern matching, no understanding, no reasoning, just very fancy autocomplete.

In some sense, true. The problem is that “pattern matching” includes “the kind of pattern matching humans do,” and once your pattern matcher can solve novel math problems, write working code for things it’s never seen, and play passable chess from text, the dismissive “just” is... pretty rough, imo, as far as explanations go.

Mechanistic interpretability. What people are actually finding when they look inside. Specific attention heads doing specific things, feature directions that represent specific concepts, circuits that compose into bigger functions. Real work, and SOME results (though still pretty fuzzy in a lot of ways imo). If you want to start digging into THAT, I just so happen to have built a tool to try to make that as easy to understand as possible.

The catch is that it’s incremental in a way that doesn’t connect to the big “why does this work” picture. Researchers can tell you which attention head seems like it’s mapping to subject-verb agreement, which feature direction sorta looks like it might track “is this text in French.” That’s progress. It still doesn’t explain why the larger model has capabilities the smaller one doesn’t, and a LOT of it is playing a game of whack-a-mole as more data invalidates or updates the understanding of individual attention heads. We’re mapping individual rooms of a building we can’t read the blueprint for, using a loose pile of misshapen scrap wood as our main measuring tools.

So the honest answer to the question is that we have a lot of words for what’s happening and not a lot of explanations. Compression and scaling laws are descriptions pretending to be explanations. Interpretability is finding real pieces, slowly, but not the shape they connect into or anything about why it happens. The thing works. We can predict, vaguely, when it’ll work better. We can’t say why.

Oh, and Chess

Until LLMs got big, AI that could play chess was a famous, separate thing. Stockfish. AlphaZero. Whole research programs, decades of work, dedicated architectures, none of which shared a lineage with language modeling. A chess engine is not an LLM. Not even close.

Then somebody noticed GPT-3 could play chess. Not well, not at grandmaster level or anything, but it could just do it, from being trained on text that apparently contained chess games written in notation, books ABOUT chess, etc. Same thing happened with code (and that evolved FAST into the entire concept of vibe-coding we have now). Same with basic math. Same with translation between language pairs nobody specifically trained on.

What they were paying for, in hindsight, was a thing that absorbed entire previously-separate AI subfields just by getting bigger. They were trying to build a better translation tool. The translation tool kept eating other people’s research areas as a side effect.

That’s what the question is actually pointing at, I think. The goals were real. The money went where they thought it was going. The thing that came out the other end was bigger and weirder than expected... and now they mainly just try to make the new models as big as they can and so far that’s worked out, even without understanding why or how... that’s probably fine, right?

Next time, we’ll get to Part 3 for real and dig into jailbreaking and misalignment.