The Vibe-Coding Scare

The messy truth behind the “AI code is 2.74× worse” headlines

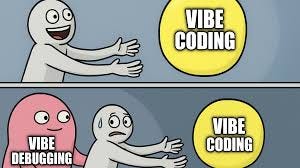

I keep seeing this stat get passed around: “AI-generated code has 2.74x higher security vulnerabilities and 75% more misconfigurations.” It shows up in articles, it shows up in tweets, it gets dropped into conversations like it’s one finding from one study, and everyone nods and goes “see? vibe coding bad” and moves on.

My first question wasn’t whether that’s bad. My first question was compared to what?

That question sent me down a rabbit hole through every major AI coding study from the last year. I read the papers, not the summaries, not the blog posts about the blog posts, the actual papers with the methodology sections and the confidence intervals and the parts nobody quotes. What I found wasn’t a clean answer. It’s a mess. An interesting mess, because none of these studies are measuring the same thing and the headlines pretend they are.

Where the Scary Number Comes From

The 2.74x comes from a CodeRabbit report that looked at 470 GitHub pull requests. 320 AI-co-authored, 150 supposedly human-only. I say “supposedly” because the report itself admits they can’t confirm the human PRs didn’t have AI in them. Their words: “we cannot guarantee all the PRs we labelled as human authored were actually authored only by humans.” So the baseline is a little bit contaminated already. The 2.74x isn’t even the overall number, it’s “up to 2.74x” in one specific subcategory, security findings. The actual overall number is 1.7x more issues per PR (10.83 vs 6.45), measured across 470 open-source PRs, by a company that sells AI code review tools (a detail that somehow never makes it into the tweet thread).

Not saying the data is wrong. I’m saying there’s a gap between “up to 2.74x in one category from 470 PRs with a contaminated control group” and how that number shows up in headlines.
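If you want to sanity-check that gap yourself, the arithmetic is short. This is a minimal sketch using only the per-PR figures quoted above; the variable and function names are mine, not CodeRabbit's.

```python
# Per-PR issue counts as quoted from the CodeRabbit report above.
ai_issues_per_pr = 10.83
human_issues_per_pr = 6.45

# The "up to" figure for the security subcategory, as quoted above.
headline_security_ratio = 2.74


def ratio(ai: float, human: float) -> float:
    """Return how many times more issues per PR the AI group has."""
    return ai / human


overall_ratio = ratio(ai_issues_per_pr, human_issues_per_pr)

# How much larger the headline number is than the overall number.
headline_inflation = headline_security_ratio / overall_ratio

print(f"overall ratio: {overall_ratio:.2f}x")
print(f"headline vs overall: {headline_inflation:.2f}x larger")
```

The overall ratio rounds to the report's 1.7x, and the number in the headlines is well over one and a half times that.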

The 45% figure that tends to get mashed in next to it (the share of AI-generated code samples that failed security checks) is from somewhere else entirely, a Veracode report where they gave 80 coding tasks to 100+ LLMs. These weren’t normal coding tasks though, they were designed to test security weaknesses. Sort of like giving a driving test that’s all parallel parking and then going “wow, drivers are bad at parking.” 80 trick questions, no human comparison baseline. We don’t know if human developers would pass those same tasks. Veracode’s own historical data says roughly 70% of applications have at least one OWASP Top 10 flaw, so it’s not like humans are crushing it either.

Two different studies. Two different methodologies. Two different things being measured. One sentence in an article.

The Speed Claims Have the Same Problem

The other side plays the same game. “AI makes developers 55% faster!” Cool, does it though?

The METR study is the only actual randomized controlled trial we have on AI coding productivity. The gold standard. It found that AI made developers 19% slower. Not faster. Slower. Sixteen experienced open-source developers, each maintaining codebases they’d worked on for 5+ years, handed Cursor for basically the first time. AI’s biggest strength is picking up context fast on unfamiliar code, and they tested it on people who already had all the context. That’s like, I don’t know, handing someone a calculator during an exam they’ve already memorized the answers to and then concluding calculators don’t help with math.

The February update is more interesting though. They expanded to 57 developers and the slowdown basically evaporated. Returning participants: 18% slower, confidence interval crosses zero, not statistically significant. New recruits: only 4% slower, also not significant. Plus 30-50% of developers dropped out of the study because they didn’t want to work without AI, which, hmm, tells you something about the remaining sample. The headline finding just kind of went away with more data and nobody updated the headline.

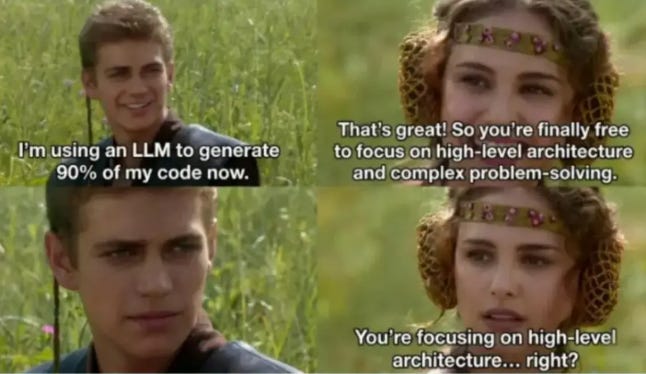

Then there’s the CMU study on Cursor, and this one I’d call solid. 806 repos, proper diff-in-diff, peer-reviewed at MSR ’26, real methodology. Month one after Cursor adoption: commits up 55%, lines of code up 281%. That speed is real, I’m not going to pretend it isn’t. Months three through six though? Velocity gains gone. Not statistically significant anymore. Meanwhile static analysis warnings went up 30% and code complexity went up 42%, and those numbers don’t fade. They just sit there.

Speed is a sugar rush. Quality debt is the hangover that’s still there when the buzz wears off (which is what the paper says, in fancier language).
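For anyone who hasn’t run into diff-in-diff before, the idea is to compare the change in repos that adopted a tool against the change in repos that didn’t, so you’re not crediting the tool for growth that would have happened anyway. Here’s a minimal sketch of the textbook two-group, two-period version. The commit counts are made up for illustration, the function name is mine, and the paper’s actual estimator is almost certainly more involved than this.

```python
from statistics import mean


def diff_in_diff(treated_before, treated_after, control_before, control_after):
    """Textbook difference-in-differences on group means.

    Effect = (change in the treated group) - (change in the control group).
    """
    treated_change = mean(treated_after) - mean(treated_before)
    control_change = mean(control_after) - mean(control_before)
    return treated_change - control_change


# Hypothetical monthly commit counts per repo (NOT data from the CMU paper).
adopters_before = [40, 50, 60]
adopters_after = [70, 75, 80]
controls_before = [45, 50, 55]
controls_after = [50, 55, 60]

effect = diff_in_diff(
    adopters_before, adopters_after, controls_before, controls_after
)
print(f"estimated effect: {effect} commits per repo per month")
```

In this toy data the adopters went up by 25 commits, but the controls went up by 5 on their own, so only 20 of that gets attributed to adoption. That subtraction is the whole trick.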

Meanwhile, In Reality

The Stack Overflow 2025 survey hit 49,000 developers and the numbers sort of contradict each other in a useful way. 66% say they spend more time fixing “almost right” AI code. 69% say agents increased their productivity anyway. Both are true at the same time, which makes sense: it’s faster even with the cleanup, just not as fast as the raw speed numbers suggest.

The number that actually matters though: 72% say vibe coding is not part of their professional work.

Nearly three-quarters of working developers aren’t doing the thing the scary stats are about (which raises the question of what exactly we’re all arguing about). The way people actually use AI for coding at work is more structured than the discourse assumes, and nobody’s studying what that looks like.

The Interesting Gap

The data gets thin here, which is the whole point of writing this.

There’s a SWE-bench Pro analysis that compared different AI coding frameworks running the same foundation model on 731 problems. Two frameworks, identical model underneath, and they scored 17 problems apart. The scaffolding around the model (the planning steps, review gates, and verification loops) mattered roughly as much as which model was doing the actual coding. Which, I don’t know, seems like it should be a bigger deal than it is.
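To put that 17-problem gap on the same scale as a benchmark score, here’s the conversion as a quick sketch. It uses only the two numbers quoted above, and the variable names are mine.

```python
# Figures as quoted above from the SWE-bench Pro analysis.
total_problems = 731
gap_in_problems = 17

# Convert the raw problem-count gap into percentage points of the benchmark.
gap_pct_points = 100 * gap_in_problems / total_problems

print(f"gap between frameworks: {gap_pct_points:.1f} percentage points")
```

That works out to a bit over two percentage points between two frameworks running the identical model.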

Nobody’s talking about this, and I don’t understand why. Because it means the question isn’t just “is AI code good or bad,” it’s “does how you use the tools change what comes out.” The answer, based on this at least, is yeah. Considerably. The CMU researchers said the same thing from the other direction: they found that raw Cursor adoption without guardrails produces temporary speed and permanent quality debt, and their actual conclusion was that quality assurance needs to be “a first-class citizen in the design of agentic AI coding tools” (their words, not mine, but yeah). That’s not “AI coding is broken.” That’s “AI coding without structure is broken.” Those are really different claims and people keep treating them like the same one.

The prompting research backs this up too (scattered across multiple sources so I can’t point to one clean paper, which is annoying). Structured planning before generation cuts refinement cycles by about 68% and debugging time by 60%. Front-loading the thinking reduces defects. An orchestration system that forces planning before generation should get those benefits automatically, without relying on the developer remembering to do it.

I think AI coding with proper guardrails, the kind with planning phases and automated review and security scanning and verification loops baked in, probably still wins the full-lifecycle race. Not because the code is good, the data is pretty clear that it isn’t, but because the speed advantage is large enough to absorb the quality tax when you’re catching shit early instead of finding it in production at 2 AM. The CMU paper says a 5x increase in static analysis warnings would completely cancel Cursor’s velocity gain. If built-in review keeps the increase below that threshold, the math works. That’s a hypothesis, though, not a conclusion.

I could be totally wrong. I’m playing connect-the-dots with pieces from different puzzles entirely, Charlie Day in front of the conspiracy board energy. What I’m not wrong about is that the conversation is a little bit broken. Scary numbers from small studies with contaminated baselines getting stacked next to synthetic benchmarks with no human comparison, everyone writes a headline like the whole damn thing is settled, and nobody stops to notice that none of these studies are even measuring the same thing. The full picture doesn’t exist yet. We’re all squinting at different parts of an elephant and arguing about what animal it is, and the part nobody’s looked at, what happens when people actually use these tools well, with structure, with planning, with built-in quality checks, is the part that matters most.

Anyway, someone should probably run that study. I’d read it.

Nice article. Throughout all of the cited studies above (I've heard of them all but haven't read them as you did), what I've thought all along, and never see discussed, is: "how did developers FEEL while creating the code?"

My own opinion is even if AI/no AI speed was the same (doubt it) and if quality was even slightly lower with AI, that would still make going all-in on AI worth it to me because of the psychological burden that's removed from the developers.

I was writing code the old-fashioned way for like 20 years before AI came on the scene, and the old-fashioned way just is not it for your psychological health. The worst part of the job has been removed and it's almost an acceptable job now. I never see raw benchmarks addressing that.