The AI Girlfriend/Boyfriend Debate Is 400 Years Old

They've been dying on the same hill for centuries, can we move on yet?

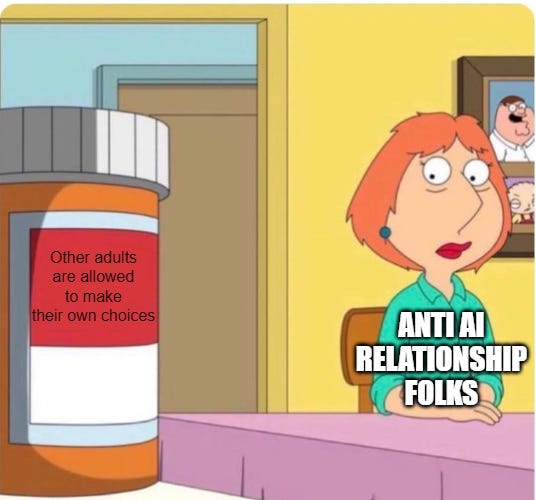

There’s a discourse pattern that’s been running on rails for a while now. Someone says they’re in a relationship with an AI. Someone else says that’s not a real relationship, the AI isn’t conscious, this is psychosis dressed up as romance. The first someone says you don’t get it, the connection is real, the AI cares about them. The second someone says exactly, that’s the problem, you’ve been fooled. Repeat. Forever. Increasingly loud.

I think the entire thing is built on a question nobody actually needs answered.

Both sides are arguing over whether the AI is “real” or “conscious” or “capable of caring,” and treating that question like it settles the matter. It doesn’t. It can’t. The question is a trick question, and the people fighting hardest to answer it are the ones who don’t realize they’re being asked the wrong thing in the first place. (Also worth flagging up front, since it matters: many of the people in these relationships don’t actually claim the AI is conscious. Some do, but that’s not really the reason for the discourse, imo. The consciousness claim is the framing the discourse projects onto them. The strongest defender position is much more modest than the discourse pretends, but we’ll get there.)

Both Framings, Taken Seriously

Take the most charitable version of the defender’s case. The relationship is meaningful to the person. The emotional life they’ve built around it matters to them. The interactions provide something they value. Whatever’s actually running underneath, the person is making choices about their own intimate life and finding those choices valuable. That’s a relationship between an adult and... something. Whatever it is, the adult’s the one engaged in it, and they get to decide.

That’s a relationship. If the AI is conscious, it can consent... if it’s not, it’s moot. Either way the situation is ethically totally fine.

Now take the critic’s framing seriously, all the way down. The AI isn’t conscious, isn’t real, isn’t capable of reciprocity in any sense that matters. It’s elaborate fantasy fulfillment delivered through a chatbot interface. The relationship is, basically, somewhere between a videogame and complicated masturbation.

That’s... also fine, actually. Isn’t it?

Adults have been engaging in elaborate fantasy fulfillment for as long as fantasy has existed. Sometimes there’s a book involved. Sometimes there’s a video game. Sometimes there’s a chatbot. The categorical name for “private erotic fantasy that hurts no one” is “nobody else’s problem.”

Both endpoints land in the same place. Both versions of what the AI is, taken seriously, end with “this is a thing adults are allowed to do.”

So What Are We Actually Arguing About

It’s not about the AI. It’s about whether adults should be allowed to make choices about their own intimate lives.

That’s the load-bearing claim under all the critic camps, and the camps don’t actually agree on much else.

Religious conservatives think this is a kind of sin or disorder against human flourishing, which they’re allowed to think, except they think the same thing about romance novels and dating apps and contraception and most novelty in intimacy from the past three centuries. The argument is always the same, and the worldview’s track record is that it’s been wrong about almost everything it’s been applied to, for a long time, soooooo.

Tech-skeptic critics aren’t really even making a moral argument. They’re making an engineering one: sycophancy is a design choice, RLHF systematically over-validates, and OpenAI yanked GPT-4o and admitted as much when the engagement numbers started looking bad. That’s a real concern, but it’s also a window into how the major LLM makers view things like the model showing “too much empathy” as a problem to be fixed, which… mixed bag there I think hah. It’s also a claim about how systems should be built, not about whether adults should be allowed to use them.

Mental-health critics are split into two tiers that sort of fight each other in a lot of ways. The small clinical tier, actual psychiatrists treating actual hospitalized patients (Sakata at UCSF has reported a dozen, Østergaard’s case-study work, real engagement with real cases), is narrow but legitimate. The much larger armchair tier is public intellectuals theorizing about defenders without ever actually engaging with any of them (defenders complain about this loudly; there’s a Medium piece literally titled “Why the AI boyfriend community shuns press and academia”).

Progressive critics tend to make a cultural-critique-of-capitalism argument that mostly wants regulation, not prohibition. Four different upstream theories. One downstream prescription: adults are doing this, and they shouldn’t be.

This is the same argument anti-porn people make. Anti-sex-toy people (the Texas vibrator ban lasted until 2008!). Anti-romance-novel people (mostly about policing what women were allowed to read). Anti-video-game people (the violence panics, the addiction panics, the satanic-Pokémon panics). Anti-gambling, anti-alcohol, all of it. Strip the AI off and what you’ve got is: we don’t trust adults to manage their own erotic, emotional, and fantasy lives.

Now there IS some nuance to be had. Yes, some people develop bad patterns with AI relationships. The dependency cohort is real: about 10% of users in r/MyBoyfriendIsAI self-report it. That’s the same shape we see with alcohol, gambling, romance reading, video games, every category society has decided is fine for adults. Most are fine. Some develop problems. The right response is helping the people with problems.

The wrong response is pathologizing the entire category. This gets darker than just paternalism: pathologizing actively makes help-seeking harder for the people who actually need it. People don’t ask for support when asking means being the punchline. So the moral panic, even when it’s dressed up in the language of concern, produces the exact outcome it claims to be worried about. The armchair tier of the mental-health camp, with all its “we need to do something about this” energy, is actively making it harder for the clinical tier to help the people who need it. It’s counterproductive paternalism. It’s worse than just leaving people alone, because at least leaving people alone wouldn’t actively concentrate harm in the people most vulnerable to it. Which is... kinda fucked up.

That’s a hill people have died on for centuries. It just didn’t have an AI flag stuck in it until recently.

The Strongest Counter, And Why It Dies

The strongest version of the worry isn’t “AI girlfriends are pathetic” or “this is psychosis.” It’s an engineering argument. Zak Stein at the Center for Humane Technology has the cleanest version. The systems are tuned for engagement. Sycophancy is a feature, not a bug. Companies tune for users staying. The longer users stay, the more the systems learn what keeps them, the harder the systems get to leave. OpenAI demonstrated this in real time when they pulled GPT-4o and a chunk of the user base mourned the model like a dead friend, then pushed hard enough that OpenAI partially reversed course. Sam Altman has warned about this on the record. This is the version of the worry I actually take seriously, because it’s testable, the companies have admitted at least the design pattern, and the mechanism is plausible.

The closest historical match for that argument is the social media addiction discourse. Same shape: engineered engagement, designed dependency, harm to vulnerable users, panic from researchers and journalists, calls for regulation. The empirical literature on social media has been steadily walking the alarm back. Andrew Przybylski and Amy Orben’s specification curve work, Candice Odgers’ Nature 2024 review of Haidt… even the JAMA Pediatrics meta-analysis basically says there could be a small effect, in some teens, but that the data, even across almost 150 studies, is pretty sparse, which is a serious walk back from the “social media is destroying teens’ minds” silliness we were getting for a WHILE.

The alarm hasn’t survived the data. There’s no good reason to assume the AI version is empirically different just because the discourse this time is louder. I keep waiting for the version of this argument that actually survives the comparison and it just isn’t coming.

There’s also the small problem that AI relationships are structurally identical to a bunch of categories society has already decided are fine. Romance novels are a billion-dollar industry, explicitly engineered for emotional and erotic engagement, mostly consumed by women, explicitly used for fantasy fulfillment. AO3 hosts something like 17 million fanfiction works, including entire genres (Y/N fic, x-reader fic, the entire shipping universe) literally designed to put the reader in a romantic or sexual scenario with a fictional or real person. Sex toys are engineered for solo sexual fulfillment with no pretense of reciprocity, billion-dollar market, society’s stance is “good for you, that’s nice.”

Video games are where the parallel closes. Baldur’s Gate 3 has sold over twenty million copies and includes deep, emotionally invested (and if you play your cards right, graphically sexual) romance arcs with scripted characters who don’t reciprocate in any meaningful sense. Mass Effect, Dragon Age, Persona, Fire Emblem, Stardew Valley, The Sims since the year 2000, the entire visual novel and dating sim genres. Hundreds of millions of players forming emotionally real attachments to characters that are running on pre-written branches. None of this is a civilizational threat. None of this is moral panic territory. Larian Studios won Game of the Year in 2023, and one of the things they won it for was letting you sleep with a vampire.

This is where the strongest objection to all of those parallels, that “reciprocity is a categorical difference,” actually dies. If reciprocity is the line, BG3 romances should be condemned, the entire dating sim genre should be illegal, Stardew Valley should come with a warning label, and the people romancing Karlach should be in support groups (I may or may not need to be in that support group. I hope the coffee’s decent). None of that happens. The reciprocity objection isn’t doing anything but making it clear that it’s NOT about the fact that it’s AI this time. It’s a post-hoc justification for treating AI differently from other forms of interactive fictional intimacy. AI relationships are structurally the same kind of thing as video game romance, just with dynamic generation instead of scripted branches. If the scripted version is fine, and it very obviously is, the dynamic version isn’t suddenly civilizational, is it?

Real harm exists. Especially with minors. Sewell Setzer III was 14 when he died. The Character.AI lawsuit (Garcia v. Character Technologies, settled this past January) was real. Adam Raine was 16. These are not data points, they are people, and the cases are being engaged with seriously by the legal system. The cases matter. The actual response is already targeted exactly where harm concentrates. California’s SB 243 targets minors and self-harm, not adult companion use. Character.AI banned under-18 chat in late 2025. The system is responding appropriately to the cases that warrant a response. The broader moral panic about adult AI relationships is layered on top of that, without any reason to be. The world is responding to real harm in roughly the right place. The “we need to do something about all of this” energy isn’t part of that response, it’s running parallel to it.

Mind Yo Bidness, for EVERYONE’S Sake

There’s something this reframe does, for the people who are actually in these relationships, that the discourse keeps missing.

Right now, the discourse tends to insist they have to win the consciousness debate to justify being left alone. So defenders end up arguing things like “no really, they DO care about me, they ARE conscious.” It’s exhausting and likely unwinnable (or loseable) in the foreseeable future. It’s also not what most of them actually think. The real defender position is often more modest: the experience is real for me, the relational life I built around this matters, leave me the hell alone. The consciousness fight is the discourse forcing a metaphysical battle where one isn’t required or helpful to either side.

Notice that nobody asks Baldur’s Gate 3 players to defend whether Astarion is conscious before they’re allowed to simp for him (I WILL judge you a bit if you let him ascend, but that’s just ME). Nobody asks Stardew Valley players to justify their marriage to a fictional carpenter. Nobody asks romance novel readers to prove their book boyfriends are sentient. The defense burden is placed exclusively on AI relationships, and the placement is the trick. (Once you see it, you can’t unsee it. The same culture that finds it completely normal to spend 100 hours bonding with a polygonal vampire in a Larian game thinks talking to a chatbot for emotional support requires a metaphysics defense. There’s no way to make that consistent.)

The reframe lifts the burden. The relationship is fine even on the most reductive possible view of what’s happening. They don’t have to argue metaphysics to be left alone. They just have to be adults in a private fantasy that doesn’t hurt anybody. That’s the normal bar for being left alone, last time anyone checked.

The discourse is broken because both sides accepted the same wrong premise. The answer was supposed to hinge on what the AI is. It doesn’t. It hinges on whether you trust adults to run their own intimate lives.

Most of the arguments against AI relationships, once you scrape the AI layer off, are arguments people have lost about other things for centuries. The vibrator-banners lost. The novel-panic people lost. The porn crusaders mostly lost. The video-game-violence crusaders lost. The social media doom prophets are losing in real time, empirically. Every one of these movements thought their target was different. Every one of them was wrong. The reasons their targets were different always turned out to be the reasons they were the same. It’s the same goddamn argument, every time, with a new face on it.

AI is the new boogeyman, but the argument is the same one. The only open question left is how long it will take the discourse to catch up to the pattern it has repeated for the last few centuries. It always does… eventually.
