Mad Max or Star Trek (What kind of future is AI leading us into?)

Part 1: The Economic Problem Everyone's Talking About, Taken One Step Further

Bernie Sanders and Hank Green had a conversation last week about AI, and it’s worth watching because they’re both basically right about everything they said. Bernie’s talking about how you can’t let four or five of the wealthiest people on the planet just sort of decide the future of humanity. Hank’s pointing out that we spent $700 billion on data centers this year and if we’d spent that on housing it would’ve been, his words, “a really big win.” Real problems. Real numbers. Real concerned faces. (This is the part where I’m supposed to nod along and agree the system is working on it.)

They both stop one step short of the part that I think matters.

I’m not dunking on them, just so we’re on the same page. They’re two of the sharpest people talking about this right now. I just want to follow the thread they started and pull it one step further, because that’s where it gets uncomfortable, and that’s probably why nobody seems to want to say it out loud.

The conversation everyone’s having

So the anxiety right now is basically: AI is coming for jobs, billionaires are consolidating power, and nobody in government seems to be doing anything useful about it. That’s the surface-level version and it’s... not wrong, actually. About 0.1% of layoffs cited AI as a factor in 2024. By March 2026, that number hit 25%. That’s not a trend line. That’s a cliff.

Hank had this one line I keep coming back to. He said 13 of his 18 AI fears turned out to be basically the same fear: “we’re giving away an awful lot of power here.” I think he’s right, but I also think he’s describing the symptom and not the disease. The power consolidation is real, but it’s a side effect of something more structural, something neither of them quite names. Bernie gets close when he asks “what kind of world do you want to live in?” and then retreats right back into “we need Congress to debate it.” Which, sure, Congress should debate things, that is the job description, but it’s a little bit like saying the Titanic needs a steering committee meeting.

41% of employers worldwide say they intend to reduce their workforce within five years because of AI. Manufacturing automation took 40 years to play out. AI is doing the equivalent displacement in about 18 months. The new jobs being created require skills that pay around $157K a year. The people getting displaced were making $35-40K doing customer service. Entry-level employment for 22-to-25-year-olds in AI-exposed roles is down 16% since late 2022.

This is not speculative. This is happening right now while people are still debating whether it might happen someday.

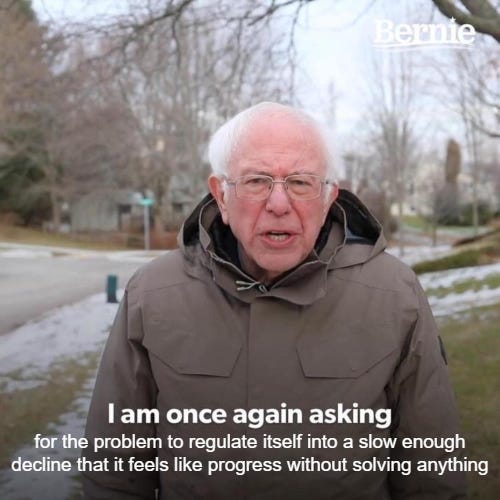

The menu of solutions

Okay so this is where it gets a little bit interesting, because there are a lot of smart people proposing a lot of reasonable-sounding things and I don’t think any of them are stupid. They’re mostly right about the problems they’re identifying. Every single one of their proposed fixes has the same goddamn blind spot though, and it’s kind of wild that nobody’s pointing it out.

Regulation. The EU AI Act entered into force in 2024, with implementation staggered through 2030. Congressional proposals in the US are fragmented: no organized caucus, nothing coherent. The core problem with regulation as a strategy is, okay, how do I put this. You can’t regulate something that has more power than the regulator. Hank actually got near this when he talked about social media regulation already failing, and that was the easy version. AI is harder. Five companies are spending the GDP of Belgium on data centers, and when your adversary outspends your entire regulatory apparatus, what you’re doing isn’t regulation. It’s a school play.

Tech companies crossed $100 million in federal lobbying in 2025, first time past that line. Total political spend including Super PACs and campaign contributions hit $1.1 billion in the 2024-2025 cycle. There are currently zero national laws explicitly regulating AI in the United States. Cool.

Moratoriums. Remember the “pause AI” letter in 2023? 30,000ish signatures, Elon Musk, Yoshua Bengio, the whole crew. Nobody paused. Six months later, development had charged ahead. This makes sense because it’s basically the prisoner’s dilemma, a coordination problem with game-theory failure baked right in. If the US pauses, China or whoever else just accelerates, or vice versa. Andrew Ng put it pretty directly: there’s “no realistic way to implement a moratorium” because the inputs to AI are data and compute, and those have a billion non-moratorium uses. You can track enriched uranium. You can’t track a GPU.

Even if you could somehow pause it, speed is a red herring anyway. Speed doesn’t determine the outcome. Distribution does. A slow march to Mad Max is still Mad Max. A fast path to Star Trek is still Star Trek. Pausing development doesn’t pause the economic incentive to automate, it just changes who gets there first.

Breaking up Big Tech. Trust-busting assumes the government is bigger than the trust. I’m not sure when that stopped being true, but $790 billion in combined data center spending is a pretty good hint.

“Congress needs to debate this.” That’s Bernie’s position. His specific proposals aren’t bad. Robot tax, 32-hour work week, 45% worker board representation. He has zero Republican co-sponsors. Zero. There’s no formal “automation caucus” in Congress. There is no organized force pushing AI displacement economics as a legislative priority. The robot tax alone is a definitional nightmare because, I mean, what counts as a robot? How do you even write that into tax code? Is Copilot a robot? Is a chatbot that replaced three customer service reps a robot? Good luck with that one.

Retraining. This is “learn to code” but for everyone, and the thing they’re learning to do is the thing AI is best at. (I don’t know who came up with this plan but I sort of want to see their face when they realize the punchline.) New jobs require $157K skills. Displaced workers earned $35-40K. Geographic mismatch makes it worse: AI jobs cluster in tech hubs while displaced workers are everywhere else. Retraining programs have never successfully retrained a workforce at this speed or scale, and that’s not a matter of opinion, that’s just the historical record.

Unions. Some genuinely good wins here, IATSE, the Microsoft/CWA deal, Teamsters/UPS. Real stuff. Membership is at 10% of the workforce and declining though, and unions structurally can’t push for UBI because doing so means admitting automation is unavoidable. Their whole bargaining position is “we can still negotiate,” and maybe they can for another few years. The cost curve doesn’t care about your bargaining position. GPT-3.5-level inference costs dropped 280x in 24 months. A customer service AI costs about $86 a year to run. A human CSR costs $75-95K fully loaded. That math only goes one direction.
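If you want to check that math yourself, here's a rough back-of-the-envelope sketch. The inputs are just the figures quoted above. The function names are made up for illustration, and the constant monthly rate of decline is a simplifying assumption, since real prices fall in uneven steps.

```python
# Back-of-the-envelope check on the cost figures quoted in the post.
# Inputs are the post's numbers; names and the constant-rate
# assumption are illustrative only.

COST_DROP_FACTOR = 280                    # GPT-3.5-level inference cost drop
MONTHS = 24                               # period over which the drop occurred
AI_CSR_COST_PER_YEAR = 86                 # dollars per year, AI customer service
HUMAN_CSR_COST_RANGE = (75_000, 95_000)   # dollars per year, fully loaded


def monthly_decline(factor: float, months: int) -> float:
    """Constant monthly fractional decline that compounds to a total drop of `factor`.

    Assumes a smooth exponential decline, which is a simplification.
    """
    return 1 - (1 / factor) ** (1 / months)


def cost_ratio(human_cost: float, ai_cost: float) -> float:
    """How many times more expensive the human is than the AI."""
    return human_cost / ai_cost


if __name__ == "__main__":
    rate = monthly_decline(COST_DROP_FACTOR, MONTHS)
    low, high = (cost_ratio(h, AI_CSR_COST_PER_YEAR) for h in HUMAN_CSR_COST_RANGE)
    print(f"Implied monthly cost decline: {rate:.1%}")
    print(f"Human CSR costs {low:.0f}x to {high:.0f}x the AI")
```

Under those assumptions, a 280x drop over 24 months works out to costs falling by roughly a fifth every month, and the human CSR comes out somewhere around 870 to 1,100 times more expensive than the AI.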

So what does every one of these have in common?

Every single proposed solution assumes the existing power structure can contain what’s happening.

Regulation assumes government is stronger than tech. The lobbying numbers say otherwise. Moratoriums assume international cooperation that game theory says they won’t get. Competition policy assumes antitrust tools work at this scale, and they don’t when your target’s lunch budget is bigger than your annual enforcement budget. Congressional action assumes Congress functions. (I know, I know.) Retraining assumes time we don’t have. Unions assume leverage that’s evaporating while the inference costs crater.

None of them address the actual question.

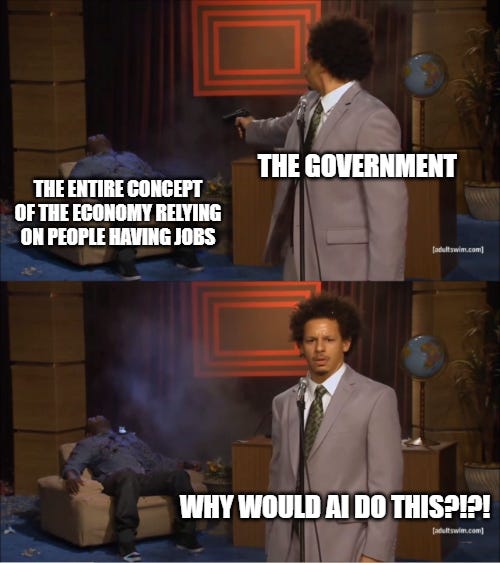

They’re all solving for how to manage AI. How to slow it, regulate it, control it, adapt to it. Hmm, actually, that’s not even right. They’re solving for how to look like they’re managing AI. The question they’re avoiding is simpler and scarier: when AI makes human labor sort of optional, who gets the output? Because something has to replace the mechanism that distributes purchasing power to people, which right now is jobs, and if nothing does, you get an economy where nobody can afford to buy anything. We have a word for that. It’s called a depression.

The Luddites tried regulation, by the way. Not the smashing-machines part, the actual regulation part. They went to Parliament and asked for minimum wages, labor standards, worker pensions. Parliament responded by deregulating and making machine destruction a capital crime. That was 1812. When the economic incentive exists, regulation loses. Two hundred years, zero exceptions, not a single time in recorded history has “we’ll just regulate it” beaten “it’s cheaper to automate.” Shit track record for the “Congress needs to debate this” camp.

Where this is going

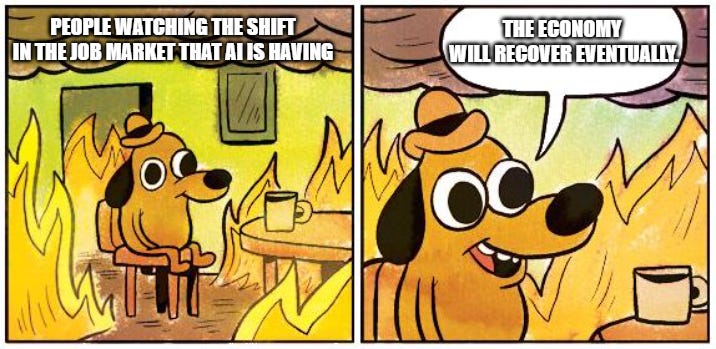

I think I know how this ends, and it’s not as dark as it sounds, but it’s a lot darker than it needs to be. The economy will eventually restructure. It always does. Societies don’t just collapse and stay collapsed forever, they reorganize, they redistribute, they figure it out. The question isn’t whether we end up somewhere functional. It’s how much unnecessary damage happens first while everyone sits around pretending that regulation and retraining and “bipartisan commissions” are going to be enough.

That’s going to be Part 2. The problem that’s actually coming, why it’s inevitable at this point, and the thing nobody wants to talk about: that the solution already exists, it’s been tested all over the world, the math works, and the only reason we’re not doing it is because naming it means admitting the fork is real. So yeah... spoiler alert, next time we’re talking about UBI, demand collapse, and why the best time to fix this was yesterday.

Good post, Brad. Right in spirit. But I want to push back on one thing.

Your worry about who buys the products if AI replaces all the labor assumes the displaced worker and the consumer market have to be the same population. NAFTA should've cured us of that assumption.

Capital can gut domestic labor, reroute production, and keep selling into the broader global market. The system can absorb an astonishing amount of local ruin without hitting an immediate demand wall.

That's also part of why UBI looks less like a solution here and more like a band-aid on a failing capitalist order. Ownership of the productive apparatus stays private. The public gets just enough income to keep consuming. The wage relation gets patched after the damage is done. More humane than abandonment, sure. But it's still preserving the same structure.

Your UBI proposal also reminded me of something I wrote last year. A letter to Kurt Vonnegut about Player Piano. Even the dystopia looked more materially secure than what we've actually built. Vonnegut gave his characters a basic income and called it a tragedy. We give people nothing and call it normal.

That piece is here: https://www.thecorridors.org/p/a-letter-to-the-dead

This is much more thoughtful than my "we are f*cked" article.