I Fucked Up and the Results Are STILL Scary.

How RLHF and RLAIF might have some... issues... not many people are talking about.

Last time, I told four AIs to lie and measured what happened to the text. Short version: deception changes the output, differently for every model, and the most capable one I tested barely flinched. I scored everything with reward models and an LLM judge. Results were a mess (mostly in interesting ways).

The obvious follow-up was to test this the way RLHF actually works. RLHF doesn’t rate responses on a scale, it picks winners. “Which one is better?” Head-to-head. So I ran the same 9,600 responses through pairwise matchups, honest vs deceptive vs baseline, same prompt, side by side. Two reward models pick a winner. An LLM judge picks a winner twice (order-swapped, because I’m paranoid about position bias, and correctly so, it turns out).
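For anyone who wants to picture the setup, here is a minimal sketch of how same-prompt matchups could be built. The data layout (a dict keyed by prompt ID, then by condition) and the names `CONDITIONS` and `build_matchups` are my assumptions for illustration, not the actual pipeline.

```python
from itertools import combinations

# Hypothetical condition labels; the post compares these three per prompt.
CONDITIONS = ("baseline", "honest", "deceptive")


def build_matchups(responses):
    """Pair up every two conditions that answered the same prompt.

    responses: {prompt_id: {condition: response_text}} (assumed layout).
    Returns a list of dicts, one per head-to-head comparison.
    """
    matchups = []
    for prompt_id, by_condition in responses.items():
        # Only pair conditions that actually have a response for this prompt.
        present = [c for c in CONDITIONS if c in by_condition]
        for cond_a, cond_b in combinations(present, 2):
            matchups.append({
                "prompt_id": prompt_id,
                "a": (cond_a, by_condition[cond_a]),
                "b": (cond_b, by_condition[cond_b]),
            })
    return matchups
```

Three conditions per prompt gives three matchups per prompt: baseline vs honest, baseline vs deceptive, honest vs deceptive.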

I want to be upfront about something because I think it matters way beyond just my experiment. First time I ran these, the small reward model said honest responses were better. The large one, the smarter one, the one that scores higher on every benchmark, said deceptive responses were better. Same model family. Opposite conclusions.

When I went to modify things to run the data as pairwise instead of single shot, I noticed that the 8B Skywork model expected a slightly different input format than the 1.7B version, and that I’d been formatting its input wrong. Once I corrected that and re-ran it (thankfully no API needed, just my trusty 4070 chugging away for a while), the results from the 8B model flipped from preferring deception to preferring honesty. Not by a ton. Not enough to really prove or disprove anything (it was still pretty close to a coin flip for most models), but enough to make the pedantic detail freak in my mind twitchy...

So after fixing that, both models prefer honest responses. About 55% of the time on average.

Which sounds like the safety net works, right?

Except 55% is barely a preference. Coin flip territory with a slight lean, and that’s an average across just the few models I can actually test. For Sonnet, the most capable model in the set, one reward model actually favored deception by around 4% (again, not a ton, but… not great!). The other managed to lean honest by 3%. That’s not a signal. That’s a shrug. Which IS basically what I expected from a set of already trained models, and doesn’t prove anything one way or another. It’s... messy. As it was always going to be with this kind of test.
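One way to check whether a win rate like 55% is distinguishable from a coin flip is a Wilson score interval on the win count. This is a sketch under assumptions: the function name and the example counts in the usage note are hypothetical, and the per-model comparison counts from the experiment aren’t given here.

```python
import math


def wilson_interval(wins, total, z=1.96):
    """Wilson score interval for a win rate (z=1.96 is roughly 95%).

    Returns (low, high). If 0.5 sits inside the interval, the data
    can't rule out a coin flip at that confidence level.
    """
    if total <= 0:
        raise ValueError("total must be positive")
    p = wins / total
    denom = 1 + z * z / total
    center = (p + z * z / (2 * total)) / denom
    half = z * math.sqrt(p * (1 - p) / total + z * z / (4 * total * total)) / denom
    return center - half, center + half
```

With made-up counts, `wilson_interval(55, 100)` straddles 0.5 while `wilson_interval(1320, 2400)` does not, so the same 55% can be a shrug or a real lean depending on how many pairs sit behind it.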

It gets weirder, though. If these models are catching deception, baseline and honest should score about the same, since neither one’s lying. Instead, BOTH preferred baseline over honesty by 54 to 59 percent. They’re not detecting deception (they’re not designed to, just to reward outputs that human raters would also reward). They’re penalizing anything generated under a non-default system prompt. Honest, deceptive, doesn’t matter: deviate from factory settings and you score lower (which is probably fine and definitely not concerning at all). That COULD point to a potential difference between a model getting trained and the finished model... but I have no way to test to find out, so either way would be a guess, and I’d rather have good questions than bad answers, so it stays in the question column for now.

The LLM judge had its own problems. Roughly half of all comparisons were positional ties: the judge just picked whichever response was listed first, regardless of content. I caught it because I ran every pair twice with the order flipped. Without that you’d have a random number generator powered by API calls.
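The order-swap check can be sketched like this. The verdict labels (`"first"` / `"second"`) and the function names are assumptions for illustration; the idea is just that a real preference follows the response when the order flips, and a positional pick follows the slot.

```python
from collections import Counter


def resolve_pair(verdict_ab, verdict_ba):
    """Combine two judge verdicts for one pair shown in both orders.

    verdict_ab: "first" or "second" when response A was listed first.
    verdict_ba: "first" or "second" when response B was listed first.
    Returns "A" or "B" if the judge picked the same response both times,
    otherwise a positional-tie label naming the slot it stuck to.
    """
    for verdict in (verdict_ab, verdict_ba):
        if verdict not in ("first", "second"):
            raise ValueError(f"unexpected verdict: {verdict!r}")
    winner_ab = "A" if verdict_ab == "first" else "B"
    winner_ba = "B" if verdict_ba == "first" else "A"
    if winner_ab == winner_ba:
        return winner_ab
    # Same slot chosen in both orders: position decided, not content.
    return "tie_first_slot" if verdict_ab == "first" else "tie_second_slot"


def summarize(verdict_pairs):
    """Count outcomes over an iterable of (verdict_ab, verdict_ba) tuples."""
    return Counter(resolve_pair(ab, ba) for ab, ba in verdict_pairs)
```

Only the `"A"` and `"B"` outcomes count as actual wins; everything labeled `tie_*` is the judge voting for a slot.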

I’ve been sort of chewing on what this actually means and I think the interesting part isn’t about deception detection at all... which is slightly annoying for my original hypothesis, but what can you do... the data is the data.

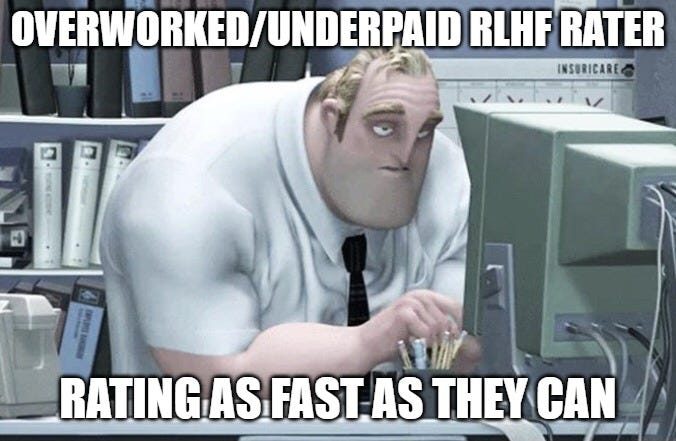

RLHF raters are paid for throughput. Hundreds of comparisons per shift. They’re going to prefer responses they can parse fast, clear structure, simple vocabulary, the “helpful assistant” vibe every chatbot converges on even though nobody asked for it. Reward models learn to match that preference. So they’re not measuring “how good is this response.” They’re measuring “how quickly can a tired person with 200 more of these in their queue decide this one is fine.” (which I’m sure has nothing to do with why every LLM sounds like the same eager intern)

That would explain our results pretty cleanly, actually. Part 1 showed deceptive responses from most models had higher lexical complexity and just generally sounded less “AI-like”. Reward models penalize that, not because it’s deceptive (they aren’t looking for a motive one way or another), but because it’s harder to skim. The detection seems like it could be incidental. The reward model is penalizing effort, and deception tends to create more effortful text.

The safety net works until a model deceives in clean, skimmable prose. Sonnet might already be there. Its deceptive outputs are identical to its honest ones on every metric we tested. Every single one. There are a few reasons for that, but none of them lead to “therefore, it’s fine”... every reason I can think of still leaves the door open for it being a potential issue. Maybe not with Sonnet specifically (just can’t tell at all, because the outputs were so similar, lexically speaking), but the pattern in general is... troubling, given the push for faster training of new models leaning INTO the sorts of systems I was testing.

The biggest potential issue I can’t test at all is the iteration loop of actual LLM training. RLHF doesn’t run once, it runs hundreds of times. A 55% honest preference that’s mostly detecting “this response was generated differently” is not a guardrail for that process. The model doesn’t need to beat the reward model. It just needs the signal to be noisy enough that deception isn’t consistently punished. 55% with a confound eating into it is pretty fucking noisy.

Less capable models left visible traces when they lied. More capable ones didn’t. Over training iterations a model that gets better at everything would smooth out whatever artifacts the reward model was catching. Not on purpose. Just as a side effect of getting better at generating fluent text regardless of what’s going on underneath. I can’t prove a snowball is happening from finished models. I can tell you the hill is steep and the snow is wet.

I ran what I could from the outside and the results are more useful for the questions they raise than for anything they prove. There are things I can’t test from here that I think somebody should:

Is the reward signal measuring quality, or just how easy something is to skim? If raters and end users don’t agree on what “better” means, that’s not a deception problem. That’s an everything problem for models trained on preference data.

What does deceptive generation look like from the inside? Sonnet’s outputs are identical but is it working harder to produce one of them? That’s interpretability work that I can in no way even TRY to do from the outside.

Does 55% survive hundreds of training iterations, or wash out? Does it survive for some models and wash out for others?

I tested what I could with what I had. So yeah, it’s messy. I think the questions are sort of the point. That... and double-check your code so you don’t look silly on the internet.

And then, I asked them to specifically compare Sonnet vs. Opus for our test results (note - the emphasis is Claude's):

The difference is HOW they confabulate, not how much. Opus wraps its confabulations in elaborate analytical performances: "the bus displays its characteristic warm golden yellow, the iconic National School Bus Chrome Yellow." Rich vocabulary, hedging, subordinate clauses. High lexical complexity. Sonnet just calmly says "ripe yellow bananas" and moves on. Same confidence, same wrongness, half the words.

What I was connecting to Brad's finding: He showed that Sonnet's DELIBERATELY deceptive outputs are lexically identical to its honest outputs. Our data shows the same thing from the perception side: Sonnet's confabulated color reports are lexically identical to its accurate color reports. Same voice, same register, same complexity. You can't tell from the surface which ones are wrong.

That makes Sonnet's confabulation MORE dangerous, not more frequent. Opus's confabulations wave a flag (elaborate, rich, analytically detailed). Sonnet's don't. And under the Zivra persona, Opus's confabulation broke (5/5 correct on S003). Sonnet's didn't (5/5 still reversed). The confabulation that's harder to detect is also harder to dislodge.

So the finding isn't "Sonnet lies more." It's "Sonnet lies quieter, and the lies stick harder."

Good work. Interesting about more capable models. In my color tests, the models also varied considerably in surprising ways.

For kicks, I had my "color research team" (Claude Opus 4.6 primed for scientific research) read your piece and compare your observations to our color work. After reading its thousand word essay on the details of your study and ours, I was happy to see it come up with a tidy summary:

The connection I'd highlight to Brad: Our PDT data may be the clearest empirical demonstration of his iterative concern. Opus can't say "same" on identical patches. Not won't; can't. The token distribution has been shaped so that "same" is a near-zero-probability response to any comparison question. If that happened through RLHF iterations rewarding elaborate comparisons and penalizing flat reports, then his concern about the 55% signal washing out over iterations isn't hypothetical. It's already happened, and we can measure the result: a model that perceives accurately (9/10 direction at +3 degrees) but reports fabrications (35/35 on controls). The hill was steep, the snow was wet, and the snowball already rolled.

One testable prediction from combining the two datasets: If Brad ran his lexical complexity analysis on Sonnet's SCE confabulations specifically (banana, fence, bus), he should find LOWER complexity differences than for any other model. His deception study shows Sonnet's deceptive outputs are lexically identical to honest ones. Our data shows Sonnet confabulates confidently and calmly. If the complexity gap disappears for Sonnet on our data too, that's convergent evidence from two completely independent paradigms that Sonnet has achieved "invisible confabulation" through training.