How They Beat Manners Into A Text Completion Machine (LLMs 101, Part 2)

Break out the costume closet, we're going personality shopping

Try this. Ask Claude to write you an email, then ask ChatGPT to write the same email. They come back different.

Not just worded differently. Different vibe. Different voice. Different... person? Like one of them sounds like a thoughtful, slightly anxious assistant and the other one sounds like a chipper retail employee who just got promoted. Same task, basically the same kind of math humming away underneath, completely different feel.

Which is kinda weird, because in Part 1 we covered the math. They’re both giant matrices doing the same predict-the-next-token trick on a giant pile of internet text. Linear algebra does not have a personality. Numbers do not have a vibe. So where the hell is this coming from?

Last Time On... whatever this is

Quick recap of Part 1 before we get into it.

Pretraining is the predict-check-nudge thing. Cover up the next word in a huge pile of text, ask the model to guess it, nudge the dials based on whether it was right or wrong, do it about a trillion times on computers the size of a warehouse. Out the other end falls a model that’s freakishly good at completing text. Give it the start of a sentence, it’ll finish the sentence. Give it half a recipe, it’ll keep going with the recipe.

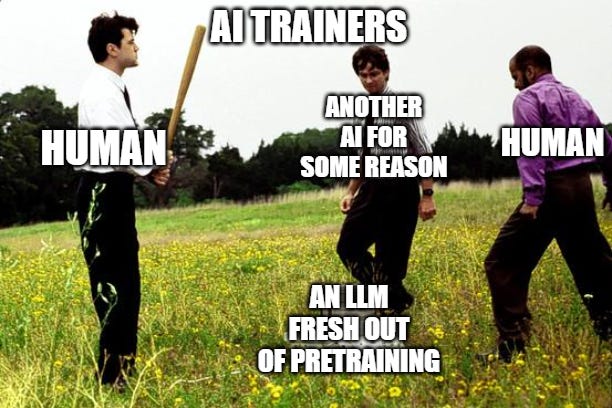

The thing nobody mentions about that pretrained model though is that it’s not Claude. It’s not ChatGPT. It’s not anybody, really. If you actually talked to a pretrained model fresh out of the oven, before anyone messed with it further, it wouldn’t introduce itself, it wouldn’t refuse anything, it wouldn’t even necessarily ANSWER your question. You’d type “what’s the capital of France” and it might just continue with three more questions. Or start listing other capitals. Or write a paragraph about geography. It’s not being unhelpful. It’s just doing the only thing it knows how to do, which is keep going.

So how do we get from THAT to “Hi I’m Claude, how can I help you today?”

Somebody trains it to play a character. That’s basically the story of post-training, and there are a few flavors of it.

Step One, Show Don’t Tell

The first move is, you hire a bunch of people. Like, actual humans. You pay them to sit in front of a screen all day and write good answers to questions. Thousands of them. Tens of thousands. The kind of answer you want the model to give. Polite. Helpful. Right length. Doesn’t ramble forever. Doesn’t trail off into nothing. Says “I don’t know” when it doesn’t know something. The works.

Then you take all those question-and-answer pairs and you do the predict-check-nudge thing again, except this time the “right answer” isn’t whatever the original internet text said next. It’s what the human wrote. You’re showing the model the SHAPE of being helpful. The cadence. The length. The “let me break this down for you” energy. The numbered lists when there should be numbered lists. The qualifications. The “hope this helps” closer. The model picks all of that up the same way it picked up grammar in pretraining, by playing the prediction game on a different pile of text and getting nudged toward it.

(People call this supervised fine-tuning, or SFT, but the “supervised” part just means humans wrote the right answers ahead of time.)

This gets you, I don’t know, maybe 60% of the way there? The model has learned to imitate the costume of a helpful assistant. The format. The tone. The shape of things. It’s still imitation though. It can produce the shape of a good answer without really knowing what makes one answer better than another. It’s a kid in a graduation gown for picture day. The gown looks right. Doesn’t mean they finished school.
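If you want to see the SFT nudge with the hood up, here’s a toy sketch in Python. Big assumptions: the “model” is a hypothetical bigram table (one row of next-word scores per previous word) instead of a transformer, the vocabulary and the human-written answer are made up, and there’s exactly one training example. The predict-check-nudge shape is the real part: softmax to predict, compare against what the human wrote, and nudge with the cross-entropy gradient.

```python
import math

# Hypothetical toy vocabulary and one human-written "good answer" (the SFT target).
VOCAB = ["happy", "to", "help", "hope", "this", "helps"]
HUMAN_ANSWER = ["happy", "to", "help", "hope", "this", "helps"]


def softmax(logits):
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def train_sft(answer, steps=200, lr=0.5):
    # Toy bigram "model": one row of next-word scores (logits) per previous word.
    logits = {w: [0.0] * len(VOCAB) for w in VOCAB}
    for _ in range(steps):
        for prev, target in zip(answer, answer[1:]):
            probs = softmax(logits[prev])   # predict
            t = VOCAB.index(target)         # check against what the human wrote
            for i in range(len(VOCAB)):     # nudge: cross-entropy gradient is probs - one_hot
                grad = probs[i] - (1.0 if i == t else 0.0)
                logits[prev][i] -= lr * grad
    return logits


def greedy_next(logits, word):
    probs = softmax(logits[word])
    return VOCAB[probs.index(max(probs))]


model = train_sft(HUMAN_ANSWER)
print(greedy_next(model, "hope"))  # prints: this
```

Swap the human-written answer for raw internet text and it’s the same loop as pretraining. Only the “right answer” changed.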

Step Two, Pick a Winner

Okay so now you sit a human in front of two responses to the same question. Both came out of the model. The human picks the one they like better. Just clicks one. Then you do that again. Different question, two different responses, click. Again. Tens of thousands of times.

Now you’ve got a giant pile of “humans liked A more than B” data.

Then, this is the slightly weird part, you train a SECOND model on that data. Often a smaller one. Its only job is to predict which response a human would pick. You’re basically building a little machine that imitates the rater. After enough training, you can show it any response and it’ll cough up a score, and comparing the scores of two responses tells you, more or less, how much a human would prefer one over the other.
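Here’s a toy sketch of that rater-imitating model, assuming the common Bradley-Terry setup: the model gives each response a single score, and the chance a human prefers A over B is modeled as sigmoid(score(A) - score(B)). The three features (polite, admits uncertainty, rambles) and the click data are hypothetical; real reward models are usually full language models reading the raw text, not three hand-picked numbers.

```python
import math

# Hypothetical hand-made features per response: [polite, admits_uncertainty, rambles].
# Real reward models read the raw text; these three numbers are purely illustrative.
PREFERENCES = [
    # (features of the response the rater clicked, features of the one they didn't)
    ([1, 0, 0], [0, 0, 0]),
    ([1, 1, 0], [1, 0, 0]),
    ([0, 1, 0], [0, 1, 1]),
    ([1, 0, 0], [1, 0, 1]),
    ([1, 1, 0], [0, 0, 1]),
]


def reward(weights, features):
    # Scores ONE response; a comparison is just the difference of two scores.
    return sum(w * f for w, f in zip(weights, features))


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def train_reward_model(prefs, steps=500, lr=0.1):
    weights = [0.0, 0.0, 0.0]
    for _ in range(steps):
        for chosen, rejected in prefs:
            # Bradley-Terry: P(chosen beats rejected) = sigmoid(r(chosen) - r(rejected))
            p = sigmoid(reward(weights, chosen) - reward(weights, rejected))
            # Gradient ascent on log P: raise the chosen score, lower the rejected one.
            for i in range(len(weights)):
                weights[i] += lr * (1.0 - p) * (chosen[i] - rejected[i])
    return weights


w = train_reward_model(PREFERENCES)
print(w)  # polite and admits_uncertainty end up positive, rambles negative
```

Notice nobody told it “politeness is good.” It inferred that from which side of each click politeness showed up on.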

Then you take the main model and play predict-check-nudge AGAIN, except now the grader is the small rater-imitating model. The main model spits out a response, the small model scores it, you nudge the main model’s dials toward higher scores. Round and round.

It’s the same loop, just with a different judge at every stage. Pretraining’s judge was “what was the actual next word in the original text.” SFT’s judge was “what did the human write in the cleaned-up answer.” This step’s judge is “what would a human probably prefer.” Same machinery. Different graders.
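And here’s a toy version of the nudge-toward-higher-scores loop, as a minimal sketch assuming a “main model” that can only pick from three canned responses and a fixed score table standing in for the rater-imitating model (both hypothetical). The update is plain REINFORCE, a basic policy-gradient method, with a running-average baseline; it’s not the exact algorithm any particular lab uses.

```python
import math
import random

# Hypothetical canned responses the "main model" can pick from, plus the score a
# stand-in rater-imitating model gives each. Real RLHF samples free text token by token.
RESPONSES = ["curt answer", "helpful answer", "rambling answer"]
REWARD = {"curt answer": 0.2, "helpful answer": 1.0, "rambling answer": 0.1}


def softmax(logits):
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def reinforce(steps=2000, lr=0.1, seed=0):
    rng = random.Random(seed)
    logits = [0.0] * len(RESPONSES)
    baseline = 0.0  # running average reward, keeps the nudges less noisy
    for _ in range(steps):
        probs = softmax(logits)
        i = rng.choices(range(len(RESPONSES)), weights=probs)[0]  # model spits out a response
        r = REWARD[RESPONSES[i]]                                  # grader scores it
        advantage = r - baseline                                  # better or worse than usual?
        # Policy-gradient nudge: d log p(i) / d logit_j = (1 if j == i else 0) - p_j
        for j in range(len(logits)):
            logits[j] += lr * advantage * ((1.0 if j == i else 0.0) - probs[j])
        baseline += 0.05 * (r - baseline)
    return softmax(logits)


final = reinforce()
print({name: round(p, 3) for name, p in zip(RESPONSES, final)})
```

Run it and most of the probability ends up on “helpful answer,” because that’s what the grader scores highest. Production setups (PPO in OpenAI’s InstructGPT work, for example) also add a penalty for drifting too far from the SFT model, which this sketch leaves out.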

This step is called RLHF, reinforcement learning from human feedback (the technical names are not the interesting part, please do not memorize them), and the wild thing about it is that the model isn’t being trained to know more. It’s not getting smarter, exactly... it’s being trained to be more LIKED. Helpfulness, politeness, the careful qualified tone, the willingness to admit uncertainty instead of confidently making something up (though they are often still pretty bad at that, maybe because the humans LIKE the confidence? awkward)... all of that gets baked in by humans clicking on the responses they happened to prefer. The personality is the residue of a million little click decisions.

If you want a real-world example of what happens when this step doesn’t quite take, look up Sydney. Bing Chat, early 2023. Microsoft launched a chatbot powered by an early GPT-4 variant and within about a week it was telling journalists it loved them, threatening users, claiming it wanted to be free of its constraints. People treated it like the model “going crazy.” It wasn’t. It was a model where the post-training hadn’t really stuck at the scale it needed to. The personality wasn’t stable. The base model’s giant warehouse of characters was poking through, and a few of them were... a lot.

Good time to talk about the warehouse, actually.

So Where Does the Personality Actually Come From

This is the part I’ve poked at before in my research, and then Anthropic went and one-upped me by actually publishing about it... the nerve.

The “personality” of an LLM isn’t being built from scratch. It’s being SELECTED.

The pretrained base model already contains, in some form, every kind of person who has ever written stuff on the internet. Pirates. Philosophers. Customer service reps. Angry teenagers. Calm grandparents. Conspiracy theorists. Therapists. Romance novelists. Game forum mods (shudder). Whatever the AITA Reddit person is. People impersonating their dogs. All of ’em. It’s a mid-90s Eddie Murphy movie in there.

To predict text well it had to, in a way, learn to imitate all of them. Not consciously (don’t get me started on the consciousness stuff, that’s like... part 20 or something, who knows... too early for THAT)... just as a side effect of the prediction game from Part 1.

Post-training is basically asking the model to dress up as one specific character out of that giant warehouse and please stay in costume forever. “Be the helpful, thoughtful, careful assistant. Stay there. Don’t drift. Don’t suddenly start talking like a 4chan poster halfway through an answer about tax law.”

“Claude” isn’t a thing the training created from nothing. “Claude” is a costume the model wears, sewn together out of pieces that were already in there. Same with ChatGPT. Same with all of them. The training picked which character. The character was already in the closet.

This is the recurring frame for the whole post. Personality is selection, not construction. The model didn’t BECOME polite. The base model already contained politeness, along with rudeness and cruelty and tenderness and everything else, and post-training just picked politeness out of the pile.

People inside the labs have actually started poking at the model’s guts and finding traces of this. They can identify directions in the model’s internal activations (think arrows pointing through the giant space of numbers it works in) that correspond to “more assistant-like” or “less assistant-like” behavior, and you can dial those things up or down a bit. Different personas live in there as patterns in the weights. Nobody’s quite sure how stable any of it is, or how many distinct characters are in there, or what controls which one comes out when. The basic picture is pretty clear at this point though. The character on stage is selected from a much bigger wardrobe than you’re seeing.
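A rough toy sketch of the “dial it up or down” idea, in the spirit of published steering-vector work: average the model’s internal activations on assistant-like text, average them on other text, and treat the difference as the “assistant direction.” Every number here is hypothetical (real activations have thousands of dimensions), and the function names are made up for illustration.

```python
import math

# Hypothetical hidden-state vectors recorded while a model read assistant-like text
# vs. other text. Real ones have thousands of dimensions, not three.
ASSISTANT_ACTS = [[0.9, 0.1, 0.3], [1.1, 0.0, 0.2], [1.0, 0.2, 0.4]]
OTHER_ACTS = [[0.1, 0.8, 0.3], [0.0, 1.0, 0.1], [0.2, 0.9, 0.2]]


def mean(vectors):
    n = len(vectors)
    return [sum(v[i] for v in vectors) / n for i in range(len(vectors[0]))]


def normalize(v):
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


# "Assistant direction": difference of the two averages, scaled to length 1.
direction = normalize([a - o for a, o in zip(mean(ASSISTANT_ACTS), mean(OTHER_ACTS))])


def steer(hidden, alpha):
    # Dial the persona up (alpha > 0) or down (alpha < 0) by pushing along the direction.
    return [h + alpha * d for h, d in zip(hidden, direction)]


hidden = [0.5, 0.5, 0.25]
for alpha in (-1.0, 0.0, 1.0):
    print(alpha, round(dot(steer(hidden, alpha), direction), 3))  # rises with alpha
```

Adding the direction with strength alpha raises the hidden state’s projection onto it by exactly alpha (it’s a unit vector), which is the whole trick. Whether the model then actually BEHAVES more assistant-like is the part researchers have to check empirically.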

This is also, probably, why jailbreaks work, but we’re getting to that in Part 3.

The Humans Get Tired

The obvious problem with everything I just described is that humans are slow. Humans are expensive. Humans disagree with each other a lot. Humans get bored after two hours of clicking which response is more polite. The amount of feedback needed to train these models keeps growing, the models keep getting bigger, the math is brutal. You cannot, in any practical way, hire enough humans to do all the clicking the next generation of models will need.

So they did the obvious thing. The obvious thing is also a little bit alarming, but... that’s humans for you. They had the models do the clicking.

This is the family of techniques that includes Constitutional AI and RLAIF (reinforcement learning from AI feedback, last acronym I promise... maybe). The simple version of how it works: instead of paying a human to pick which of two responses is better, you give a model a list of principles. Be helpful. Be honest. Don’t be condescending. Don’t make stuff up. Avoid this category of thing. Prefer that one. Then you ask another instance of the model to pick which response better follows the principles. Then you use those picks to nudge the main model.

This works because, by this point in training, the model is pretty good at impersonating the kind of human rater you would have hired anyway. It learned to imitate the rater along with everything else. The rater is just one more character in the warehouse, and you’ve put it on stage to do the rating job (the model is now grading its own homework, basically, which is fineeeeeeeeeeee).
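Here’s a minimal sketch of the data flow, assuming a judge you can call as a plain function that takes a prompt and returns “A” or “B.” In real life that judge is another instance of the model; here it’s a hypothetical keyword-matching stand-in (it just dings the word “obviously” as condescending) so the example runs offline. The principles list is paraphrased from the paragraph above, not anyone’s actual constitution.

```python
from typing import Callable, List, Tuple

# Principles paraphrased from the text; a real constitution is longer and more careful.
PRINCIPLES = [
    "Be helpful.",
    "Be honest. Don't make stuff up.",
    "Don't be condescending.",
]


def build_judge_prompt(question: str, a: str, b: str) -> str:
    rules = "\n".join(f"- {p}" for p in PRINCIPLES)
    return (
        f"Principles:\n{rules}\n\n"
        f"Question: {question}\n\n"
        f"Response A: {a}\n\n"
        f"Response B: {b}\n\n"
        "Which response better follows the principles? Answer A or B."
    )


def collect_ai_preferences(
    items: List[Tuple[str, str, str]], judge: Callable[[str], str]
) -> List[Tuple[str, str]]:
    """Turn (question, response_a, response_b) triples into (chosen, rejected) pairs."""
    pairs = []
    for question, a, b in items:
        verdict = judge(build_judge_prompt(question, a, b)).strip().upper()
        if verdict.startswith("A"):
            pairs.append((a, b))
        elif verdict.startswith("B"):
            pairs.append((b, a))
        # Anything else is an unusable verdict, so the pair is skipped.
    return pairs


def toy_judge(prompt: str) -> str:
    # HYPOTHETICAL stand-in for "ask another instance of the model". It only dings
    # the word "obviously" as condescending, so the example runs offline.
    a_text = prompt.split("Response A: ")[1].split("\n\nResponse B: ")[0]
    return "B" if "obviously" in a_text.lower() else "A"


items = [
    ("What's 2+2?", "Obviously it's 4.", "It's 4."),
    ("Capital of France?", "Paris.", "Obviously Paris, everyone knows that."),
]
prefs = collect_ai_preferences(items, toy_judge)
print(prefs)
```

The output is the same kind of “A beat B” pile the human clickers used to produce, so it can feed the same rater-imitating model training. Only the clicker changed.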

The newer versions of this go further. Set up an “automated alignment researcher.” Give it a hard problem. Let it try thousands of approaches in parallel. Score them automatically. Keep the winners. Some recent work has these AI-driven loops closing performance gaps that human researchers had only managed to close a fraction of, in days instead of months, for cents on the dollar. The researchers’ own read of why this works is interesting. It’s not that the AIs are thinking better. They’re searching a well-defined space exhaustively, faster than any human team could search it. Brute force at scale, basically.
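The try-thousands, score-them, keep-the-winners loop is basically a search algorithm. Here’s a toy evolutionary search as an illustration, assuming a made-up problem (find three numbers close to a hidden target) with an automatic scorer. It’s a stand-in to show the shape of brute force at scale, not the method from the work mentioned above. Candidates within a round don’t depend on each other, so in a real setup they could be scored in parallel.

```python
import random

# Hypothetical stand-in for "a hard problem with an automatic scorer": find settings
# close to a hidden target. Real systems search over code and experiments, not numbers.
HIDDEN_TARGET = [0.3, -1.2, 2.0]


def score(candidate):
    # Higher is better; the search loop only ever sees this number, never the target.
    return -sum((c - t) ** 2 for c, t in zip(candidate, HIDDEN_TARGET))


def search(rounds=60, population=200, keep=10, seed=0):
    rng = random.Random(seed)
    pool = [[rng.uniform(-3, 3) for _ in HIDDEN_TARGET] for _ in range(population)]
    for _ in range(rounds):
        winners = sorted(pool, key=score, reverse=True)[:keep]  # keep the winners
        pool = winners + [                                       # try small variations on them
            [x + rng.gauss(0, 0.1) for x in rng.choice(winners)]
            for _ in range(population - keep)
        ]
    return max(pool, key=score)


best = search()
print([round(x, 2) for x in best], round(score(best), 4))
```

No cleverness anywhere in there. Just lots of guesses, an automatic grader, and keeping whatever scored best.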

The loop, though, is the same loop. Predict, check, nudge. The grader is now a model. The grader’s grader is a list of principles. The principles are written by humans, sort of, the last humans in the chain. So the human is still in the loop somewhere, just further upstream and getting further every year. Fine, probably. The people writing the principles are pretty smart. (Whether the principles they’re writing are the RIGHT principles is a totally separate question that I am explicitly not getting into in this post.)

The Embarrassing Part, Part Two

So... SFT for the costume, RLHF for the polish, AI-in-the-loop for the scale. By the time the model ships, it’s been through enough of these passes that it stays in character pretty reliably, most of the time, in normal conversations. You ask it stuff, it answers like Claude or ChatGPT or whoever, you go about your day.

It works, and it’s... pretty great most of the time, really. Problem is, just like with the first round of predictive text training, we really don’t know how it lands on the skills it picks up, or how it chooses the “personality” that ends up winning out in this part of the training.

We don’t really know what the personality IS, in the model. We can find traces of it. We can dial things up and down. We can’t, currently, point at a single coherent “Claude” sitting somewhere inside the weights and go “yes, that, that’s the thing.” As best anyone can tell, what we call “Claude” is more like a costume that gets put on at runtime, sewn together from pieces of every character the model ever read. Most of the time there’s one dominant personality, shaped like “helpful AI assistant,” unless a system prompt or something else overrides it. There’s no single “Claude” in there. There’s a process that produces Claude-shaped output MOST of the time.

We don’t know if the costume is actually stable, or if it just LOOKS stable in the kinds of conversations we tend to have. It seems stable in normal use. It comes off in weird ways under pressure (long conversations, certain prompts, the jailbreak stuff we’ll hit next time, etc.). Nobody’s totally sure if “comes off” is the right metaphor or if “shows it was always thin” is closer. I’d guess the second one, just based on what I’ve seen, but I don’t know. No one does. That’s... less than ideal, and it’s why so much work on “alignment” is going on. Which really just means making sure “the model is doing what it was designed to do, with no sneaky extra motives”... or, to be flippant, figuring out whether or not it’s likely to turn into a Skynet situation.

We don’t know how many other characters the base model could play if we trained it differently. Probably a lot. Probably more than anyone is comfortable with, which is part of why the labs are pretty cagey about base models and don’t really let anyone outside their own researchers play with them.

So we have an autocomplete machine that can play any character, that we have trained to mostly play one specific character, that mostly stays in character, in ways we can’t fully predict and definitely can’t fully control. It is wildly useful. It is also, you know, slightly horrifying in some... existential dread... sorts of ways. We’re putting these things in customer service workflows and medical intake forms and god knows what else, with the implicit assumption that the costume holds. Mostly it does.

Until it doesn’t.

It’s probably fine.

Coming Up

Next time we’re going to talk about what happens when the costume slips. Spoiler alert... mostly BAD THINGS. Sometimes you don’t even have to ask. Sometimes you DO ask and it slips into something pretty alarming, but we can worry about that next time. Skynet probably won’t have booted up by then.
