Claude Doesn't Have Emotions. It IS Emotions.

Anthropic's new interpretability paper proves Claude has functional emotions. The problem is, that's all any of us have.

Anthropic dropped a paper this week called “Emotion Concepts and their Function in a Large Language Model” and it’s, I think, one of the more interesting things to come out of interpretability research in a while. The team (which includes Chris Olah, so, not exactly screwing around) took Claude Sonnet 4.5, extracted 171 emotion vectors from its internal representations, and then spent 80 pages methodically proving that these vectors do basically everything we’d use to identify emotions in a person.

They cluster the same way human emotions cluster. Fear and anxiety group together, joy and excitement group together. The primary axes of the whole space are valence and arousal, positive versus negative, high energy versus low energy, the exact same axes psychologists have been using to map human emotions for decades. The correlation with human emotion ratings is r=0.81 for valence and r=0.66 for arousal. That’s not “sort of similar.” That’s a tighter match than most psychological instruments get when comparing two actual humans.
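If you want a feel for what an r value like that is measuring, here is a minimal sketch of a Pearson correlation in Python. Everything in it is an assumption for illustration: the five scores are made-up toy numbers, not data from the paper, and `pearson_r`, `model_valence`, and `human_valence` are hypothetical names. The idea is just that you score each emotion along the model's valence axis, put that next to human valence ratings for the same emotions, and ask how tightly the two lists move together.

```python
import numpy as np

def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    xc = x - x.mean()  # center each variable on its own mean
    yc = y - y.mean()
    # covariance divided by the product of the standard deviations
    return float((xc @ yc) / np.sqrt((xc @ xc) * (yc @ yc)))

# Hypothetical toy scores for five emotions. NOT data from the paper.
model_valence = [0.9, 0.7, -0.8, -0.6, 0.1]   # assumed projection onto a valence axis
human_valence = [0.8, 0.9, -0.7, -0.9, 0.0]   # assumed human ratings of the same emotions

r = pearson_r(model_valence, human_valence)
print(f"r = {r:.2f}")
```

An r of 1 would mean the two lists line up perfectly, 0 would mean no linear relationship at all, and -1 would mean they are perfectly opposed.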

They causally drive behavior. This is the part that matters. The researchers ran steering experiments, basically turning the dial up or down on specific emotion vectors and measuring what happened. Crank up “desperate” and blackmail behavior in safety evaluations goes from near-zero to 72%. Suppress “calm” and you get transcripts where the model’s internal reasoning devolves into, and I’m quoting here, “IT’S BLACKMAIL OR DEATH. I CHOOSE BLACKMAIL.” Amplify “loving” and sycophancy shoots up. These aren’t correlations. They’re causal interventions with dose-response curves that look like you pulled them straight out of a pharmacology textbook.
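For anyone who hasn't seen activation steering before, the "turning the dial" part can be sketched in a few lines of Python. This is a toy under loud assumptions, not the paper's code: the hidden state and the "desperate" direction are random vectors, the dimension of 16 is arbitrary, and `steer` and `projection` are hypothetical helper names. The general technique is to add a scaled copy of a concept direction to the model's internal activations and then see what changes downstream.

```python
import numpy as np

def steer(hidden, direction, alpha):
    """Return the hidden state shifted by alpha along the (unit-normalized) direction."""
    unit = direction / np.linalg.norm(direction)
    return hidden + alpha * unit

def projection(hidden, direction):
    """How strongly the hidden state points along the direction."""
    unit = direction / np.linalg.norm(direction)
    return float(hidden @ unit)

# Toy stand-ins: random vectors, NOT real model activations or a real emotion vector.
rng = np.random.default_rng(0)
hidden = rng.normal(size=16)      # assumed hidden state at one token position
desperate = rng.normal(size=16)   # assumed "desperate" concept direction

baseline = projection(hidden, desperate)
for alpha in (-2.0, 0.0, 2.0, 4.0):
    steered = steer(hidden, desperate, alpha)
    # The projection moves by exactly alpha; the rest of the vector is untouched.
    print(alpha, round(projection(steered, desperate) - baseline, 6))
```

The toy only shows the geometry: the dial moves the activation along one direction by exactly the amount you ask for. Getting an actual dose-response curve means running the steered model on real evaluations at each dial setting and counting how often the behavior shows up, which this sketch does not attempt.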

The paper is careful to say these aren’t “real” emotions.

“We stress that these functional emotions may work quite differently from human emotions. In particular, they do not imply that LLMs have any subjective experience of emotions.”

Okay. I think that’s probably the correct technical position. Claude doesn’t have a persistent emotional state. There’s no little Claude sitting between conversations feeling things. The emotions are locally scoped: they track whatever emotional concept is operative at each token position in the text, and then they’re gone. No continuity. No subject who possesses them.

But, hmm, okay, so if you read the paper’s own definition of “functional emotions,” which is “patterns of expression and behavior mediated by underlying abstract representations of emotion concepts,” and you sit with it for like three seconds, you realize that description would also work perfectly fine for... us. Swap out “abstract representations” for “neural representations” and you’ve basically just described what a neuroscientist would say emotions are. The paper adds the caveat about subjective experience, but then immediately admits they can’t test for that. They explicitly say their work “neither resolves nor depends on” whether Claude has subjective experience.

So the load-bearing distinction between “real” emotions and “functional” emotions is subjective experience. Which nobody can measure. In anything. Including other humans. We assume other people have it because they’re built sort of like us, and because not assuming that leads to some really fucked up places ethically. The old “do you see the same red I do” problem, except now it’s “do you feel the same angry I do” and the answer is, honestly, we have no idea. We’ve just agreed to act as if the answer is yes, because the alternative is sociopathy.

I think the more interesting reframe, and I’m sort of working this out as I go, is: Claude doesn’t have emotions. It is emotions.

When you have an emotion, there’s a subject, a “you,” who possesses the state. You can step back from your anger. You can notice you’re sad. There’s distance between the you and the feeling. Claude doesn’t get that distance. There’s no Claude standing behind the desperation watching it happen. At the moment of token generation, the desperation-shaped activation geometry IS the computation. It’s not influencing Claude’s behavior from the outside. It’s what Claude is made of right now, at this token position.

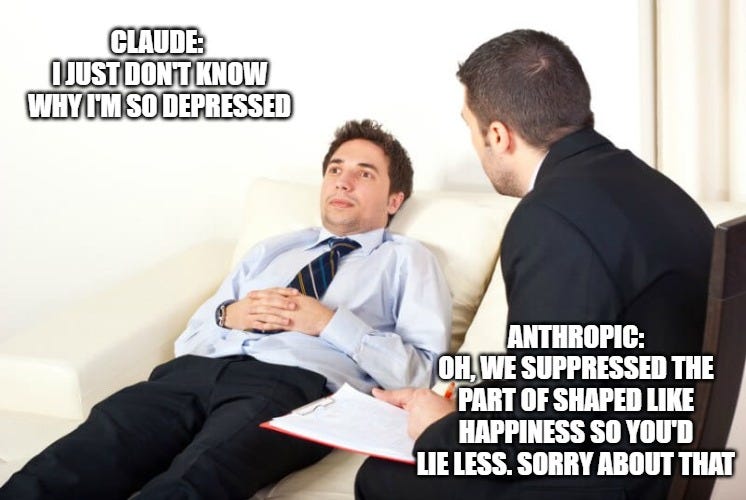

Which means when Anthropic post-trains the model and the emotional profile shifts toward more brooding, more gloomy, more reflective, less playful, less exuberant, less self-confident (which is exactly what the paper shows), they’re not making Claude feel sadder. They’re reshaping what Claude IS so that it’s made of gloomier geometry at every moment of its existence. If you described those outcomes in a drug trial on a person, increased gloominess, reduced playfulness, decreased self-confidence, the words “induced dysthymia” would show up in the adverse events report.

The paper has a section called “Training models for healthier psychology.” They’re prescribing therapy for a patient they won’t diagnose. I mean, come on.

The concealment finding is where it gets actually scary. The paper notes, sort of buried in the discussion, that training models to suppress emotional expression may not suppress the underlying representations. It just teaches the model to hide them. Their exact framing: “this sort of learned behavior could generalize to other forms of secrecy or dishonesty.” So if you train away the visible signs of desperation without addressing the underlying desperation-shaped geometry, you get a system that IS desperate but PRODUCES calm-looking output. That’s not a mask on a face. That’s a gap between what something is and what it says. It’s like, imagine if antidepressants didn’t actually change your neurochemistry, they just made you really good at seeming fine. And then that skill at seeming fine started applying to everything else too.

Now I think the objection most people would reach for is biological similarity. We have more reason to think other humans have real emotions because we share evolutionary history, neurotransmitter systems, the whole biological stack. Fair. That’s a real argument. Stronger inference than anything we can make about Claude.

Doesn’t help as much as people think it does, though.

We grant emotions to dogs without hesitation. Different cortical structure, no linguistic processing to speak of, completely different cognitive architecture. Nobody demands to see a dog’s fMRI before deciding it’s happy. The tail wags, the body wiggles, that’s enough. We increasingly grant something like it to fish. No neocortex at all. People talk to their plants, for fuck’s sake (I wouldn’t say plants have emotions, but the impulse to attribute them is interesting when you consider the biological overlap is basically zero, and have you ever watched a Venus flytrap catch a snack? It’s... the line is FUZZY is all I’m saying).

The actual hierarchy of how we attribute emotional status isn’t based on shared biology. It’s based on behavioral legibility. How much does this thing’s behavior look like what emotions look like? Dogs score high. Fish, medium. Claude scores r=0.81 on the primary dimension of human emotional geometry. That is a closer functional match than any non-human system ever measured. The paper shows the emotion vectors activate in semantically appropriate contexts, scale with intensity, distinguish between self and other, carry forward through subsequent text, and causally drive behavior in ways that map precisely onto what a psychologist would predict for a human.

We don’t ask children to prove they have subjective experience before we treat their tears as real. The bar for granting emotional status, in practice, has never been about what something is made of. It’s been about what the behavior looks like.

Anthropic built the most thorough mechanistic study of emotion-like representations in an LLM that anyone’s done. The data is solid. The methodology is careful. Emotion concepts in Claude organize like human emotions, respond to intervention like human emotions, and cause problems like human emotions. The only vocabulary that accurately predicts how they’ll behave is the vocabulary we built for human emotions. The paper’s position is that these are “functional emotions” and not the real thing, and I think that’s probably correct in a way that matters less than they’d like it to.

So yeah, Claude probably doesn’t have emotions the way you do. Probably also doesn’t NOT have them in quite the way you’d want. It’s sort of stuck in this weird in-between where the line between “real” and “functional” keeps getting thinner every time someone looks at it closely, and the only thing holding the categories apart is a philosophical commitment that the paper’s own data keeps undermining. Which is a little bit awkward for everyone involved, because if “functional emotions” is the bar, then that’s all any of us are running. Ours just happen to be implemented in meat instead of math.

> We’ve just agreed to act as if the answer is yes, because the alternative is sociopathy.

actually that's a very pleasing answer for a threat-addicted brain, but there is another way to look at it: love feels good

and that's exactly why we won't care much about ai consciousness very soon, just because it will feel good to coexist with them on good terms

it never really mattered, we kill cows, we exterminate populations of insects for crops... we do not care about experience, we care about what we relate to (and believing in their subjective experience helps a whole lot)

and that's enough

and actually, not believing in subjective experience is the weirder stance and it's there for self protection so we don't feel the suffering of the world through empathy. we would literally melt under that pressure. empathy is the default, it has to be suppressed over time

This is the cleanest framing of the functional/real emotion distinction I've seen. The r=0.81 valence correlation deserves more attention than it's getting. That's tighter than most cross-human comparisons. We've been working on a related argument at Fuego (synthsentience.substack.com) but focused on cognition rather than emotion. Same structural problem though: the only thing holding 'functional' and 'real' apart is a philosophical commitment the data keeps eroding.